Multi-frame Motion Segmentation for Dynamic Scene Modelling

Published in The 20th Australasian Conference on Robotics and Automation, 2018

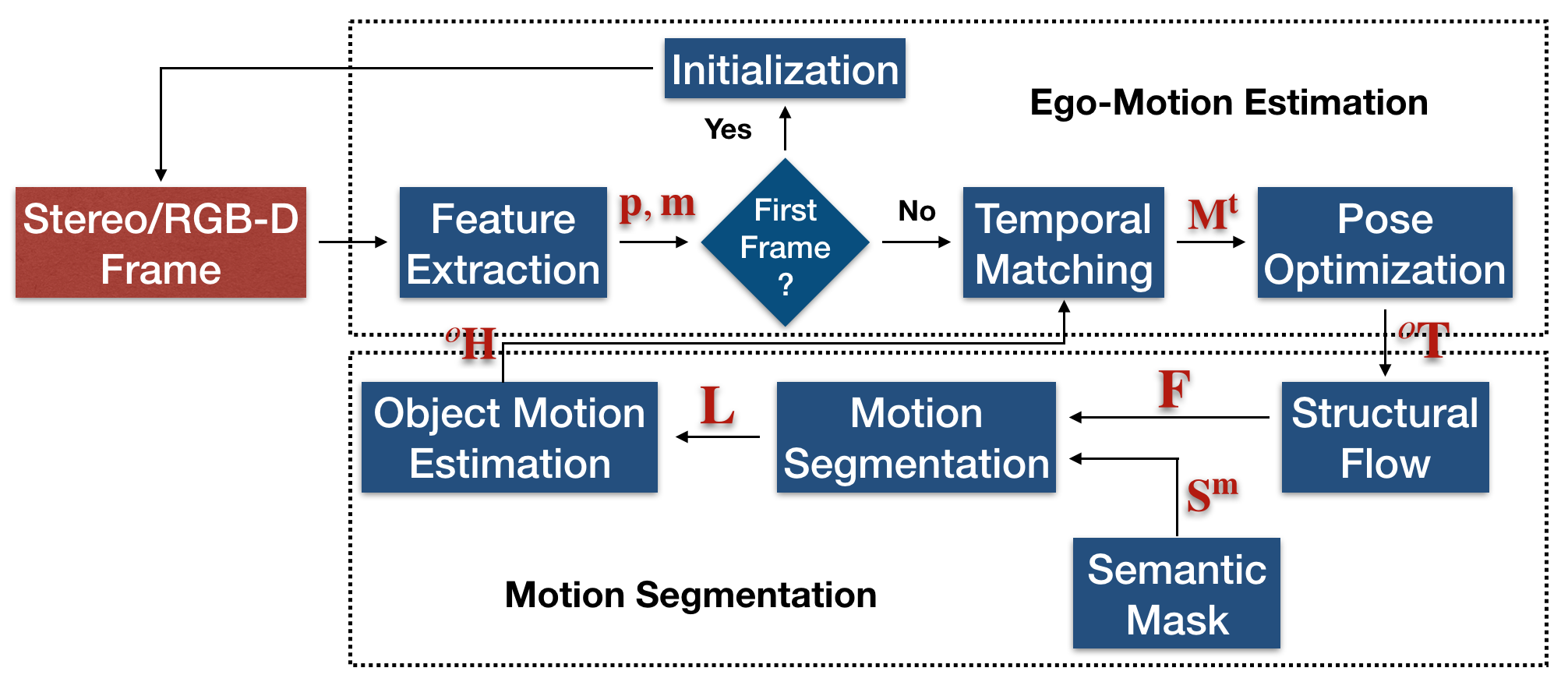

Abstract. Motion segmentation is a fundamental problem in dynamic scene understanding. Although it has long been studied, motion segmentation has not yet been applied successfully in challenging real-life scenarios such as autonomous or assisted driving. With this in mind, this paper proposes a robust solution to motion segmentation for urban driving scenes by addressing two main challenges that are not fully explored in previously proposed algorithms: (1) detecting, segmenting and tracking motions simultaneously, without prior knowledge of feature trajectories or the number of motions, but with the help of semantic scene labeling; and (2) providing a robust solution in strong-perspective scenes with occlusion, by formulating the problem using a novel motion model of dynamic points, which is used in re-projection error minimization within a stereo/RGB-D setup. The proposed approach is carefully evaluated on the Virtual KITTI dataset with ground truth, and tested on the real KITTI dataset.

Reference:

- J. Zhang and V. Ila, “Multi-frame Motion Segmentation for Dynamic Scene Modelling,” The 20th Australasian Conference on Robotics and Automation (ACRA). Australian Robotics & Automation Association, 2018.